
What Language Leaves Behind

Language compresses thought. What gets lost?

Every thought you’ve ever communicated has been compressed.

Written and spoken language is a representation of human thought—one of many, alongside images, music, architecture, gesture, clothing. But unlike a painting or a melody, language carries an illusion of precision. We treat words as if they perfectly capture what we mean.

Piotr’s Substack is a reader-supported publication. To receive new posts and support my work, consider becoming a free or paid subscriber.

They don’t.

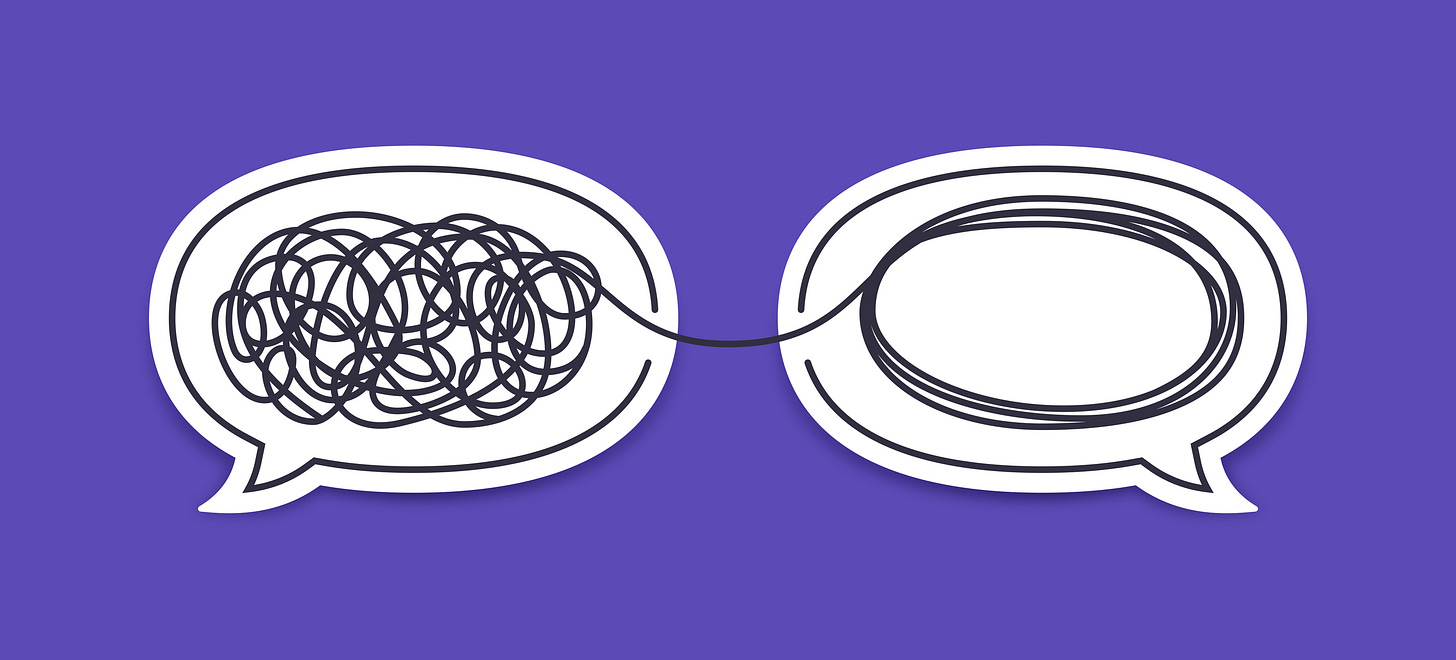

Language is a codec: it encodes the high-dimensional, tangled, contextual thing happening in your mind into a low-dimensional stream of symbols. And like every codec, it’s lossy. Information is discarded. Structure is flattened. Nuance bleeds away.

Worse, the decompression happens on the other end—in someone else’s mind, using their priors, their context, their understanding of what your words were supposed to mean.

This isn’t a flaw in how we use language. It’s a flaw in what language is.

Watching the Compression Happen

Summarization makes this visible.

Take the Wikipedia article on addition. If I asked you to summarize it in two sentences, you’d have to choose: which parts of your understanding deserve to survive the compression?

Here’s my instinctive attempt:

Summary 1: Addition is an arithmetic operation that combines two or more numbers to produce their total or sum, typically denoted with the + sign.

And here’s what I wrote when I deliberately reached for different information:

Summary 2: Addition is an operation defined for all kinds of numbers, is associative and commutative, and 0 is its identity element.

Same source. Same length constraint. Completely different outputs.

The difference isn’t in the facts—both are accurate. The difference is in which thoughts I chose to encode. My first summary emerged from resonance: the overlap between the webpage and my existing mental model. It felt like the “main point” because it matched what I already believed about addition.

My second summary required effort. I had to override my instincts and select information that wouldn’t naturally surface.

This is the compression in action. The thought “what addition means to me” is vast and associative. The two-sentence summary is a narrow channel. Something has to be thrown away.
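A toy version makes the mechanics concrete. This is only a sketch, not a claim about how minds or LLMs actually summarize: the fact list, the numeric weights, and the `summarize` function are all invented for illustration. The facts are fixed and the budget is fixed. The only thing that changes is the prior.

```python
from typing import Dict, List

# Hypothetical "facts" loosely drawn from the two summaries above.
FACTS: List[str] = [
    "combines numbers into a sum",
    "denoted with the + sign",
    "associative and commutative",
    "0 is the identity element",
]

def summarize(facts: List[str], prior: Dict[str, float], budget: int) -> List[str]:
    """Keep the `budget` facts the prior weights most; everything else is discarded."""
    ranked = sorted(facts, key=lambda fact: prior.get(fact, 0.0), reverse=True)
    return ranked[:budget]

# Made-up weights: what feels like the "main point" without effort.
instinctive_prior = {
    "combines numbers into a sum": 0.9,
    "denoted with the + sign": 0.7,
    "associative and commutative": 0.3,
    "0 is the identity element": 0.1,
}

# Made-up weights: what surfaces when I deliberately reach elsewhere.
deliberate_prior = {
    "combines numbers into a sum": 0.2,
    "denoted with the + sign": 0.1,
    "associative and commutative": 0.9,
    "0 is the identity element": 0.8,
}

summary_1 = summarize(FACTS, instinctive_prior, budget=2)
summary_2 = summarize(FACTS, deliberate_prior, budget=2)

print(summary_1)
print(summary_2)
```

With these assumed weights the two summaries share no facts at all, even though they were cut from the same source under the same length limit.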

The Illusion of Consensus

What’s remarkable is how reliably people throw away the same things.

I ran the same prompt through several LLMs:

Prompt: Summarize addition defined on this website https://en.wikipedia.org/wiki/Addition in 2 sentences

Claude 4.5: Addition is one of the four basic operations of arithmetic that combines two or more numbers (called addends or summands) to produce their total or sum, typically denoted with the plus sign (+).

GPT 5.0: Addition is a mathematical operation that combines two numbers, called addends, to produce a sum. It is one of the basic arithmetic operations and is widely used in various fields of mathematics, science, and everyday life.

Gemini 3.0: Addition, typically symbolized by the plus sign (+), is one of the four core arithmetic operations, where the sum of two whole numbers represents the total when those values are combined. It is a fundamental concept in mathematics that is both commutative and associative.

All three lead with essentially the same framing as my instinctive summary; only Gemini spares a closing clause for commutativity and associativity. They share a prior, trained into them by human preferences, about what “important” means, what “summary” means, what deserves to survive the compression.

This feels like agreement. It’s actually shared bias.

The Information That Doesn’t Exist

Here are two more summaries of that same Wikipedia page:

Summary 3: The webpage describes a mathematical operation that children as young as 5 months, and animals of some species, are capable of performing.

Summary 4: The webpage mentions the word “three” 16 times and the word “addition” 181 times.

Both are accurate. Both are valid compressions. And both probably feel wrong to you—like they’ve violated some unspoken rule about what a summary should contain.

That feeling of wrongness? That’s your prior asserting itself. Your mental codec has a built-in definition of “main fact,” shaped by culture, education, and a thousand past encounters with the word “summary.”

The LLMs share that prior because they were trained on human outputs. The result is a systematic pattern in what gets discarded: not random noise, but structured absence. Statisticians have a name for a close cousin of this pattern, “missing not at random,” where whether a value goes missing depends on the value itself. The gaps in a summary aren’t accidents. They’re artifacts of the compression algorithm, which is to say, artifacts of how we collectively decided to encode thought into language.

The Receiver’s Problem

But compression is only half the story.

When you read my summary of addition, you’re not recovering my original thought. You’re reconstructing something in your own mind, using your own priors. The same sentence means different things to a mathematician, a kindergarten teacher, and someone who’s never heard the word “commutative.”

Language doesn’t transmit meaning. It transmits symbols that prompt the receiver to construct meaning locally. The fidelity of that reconstruction depends on how well the sender’s and receiver’s codecs align.
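Here is a minimal sketch of that round trip, under heavy assumptions. I pretend a thought is a point in a two-dimensional space and a word is a label each person attaches to their own point. The codebooks, the coordinates, and the `encode` helper are hypothetical. Real meaning is nothing like this tidy, but the shape of the problem is the same.

```python
import math
from typing import Dict, Tuple

Vector = Tuple[float, float]

def encode(codebook: Dict[str, Vector], thought: Vector) -> str:
    """Sender side: pick the word whose private meaning is closest to the thought."""
    return min(codebook, key=lambda word: math.dist(codebook[word], thought))

# Hypothetical private meanings. Same vocabulary, slightly different coordinates.
sender_codebook: Dict[str, Vector] = {
    "warm": (0.6, 0.2),
    "hot": (0.9, 0.1),
    "cold": (0.1, 0.8),
}
receiver_codebook: Dict[str, Vector] = {
    "warm": (0.5, 0.4),
    "hot": (0.8, 0.3),
    "cold": (0.0, 0.9),
}

thought: Vector = (0.7, 0.25)

# Only the symbol crosses the channel, never the thought itself.
word = encode(sender_codebook, thought)

# Receiver side: the word is looked up in a different codebook.
reconstruction = receiver_codebook[word]

# Loss from squeezing the thought into a word at all.
encoding_loss = math.dist(thought, sender_codebook[word])
# Total distance between what was meant and what was understood.
drift = math.dist(thought, reconstruction)

print(word, reconstruction, round(encoding_loss, 3), round(drift, 3))
```

In this toy setup the drift is larger than the encoding loss: part of the gap comes from choosing a word, and the rest comes from the receiver holding a slightly different meaning for it.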

Usually, we don’t notice the drift. Shared culture, shared context, shared assumptions—these create enough overlap that communication feels seamless. But the overlap is never complete. Every sentence you speak arrives slightly different than it left.

What This Means

Language is not a window into thought. It’s a compression artifact.

When we summarize, explain, argue, or describe, we’re not transferring our mental states intact. We’re lossy-encoding them into a symbolic stream, hoping the receiver’s decompression produces something close enough to be useful.

Sometimes it does. Sometimes what you meant and what I understood are close enough that we never notice the gap.

But the gap is always there.

Every act of communication is an act of interpretation—twice over. First by the speaker, who must choose what survives the encoding. Then by the listener, who must reconstruct meaning from the symbols that remain.

The question is never “did I say it clearly?” The question is: whose priors are doing the work?
